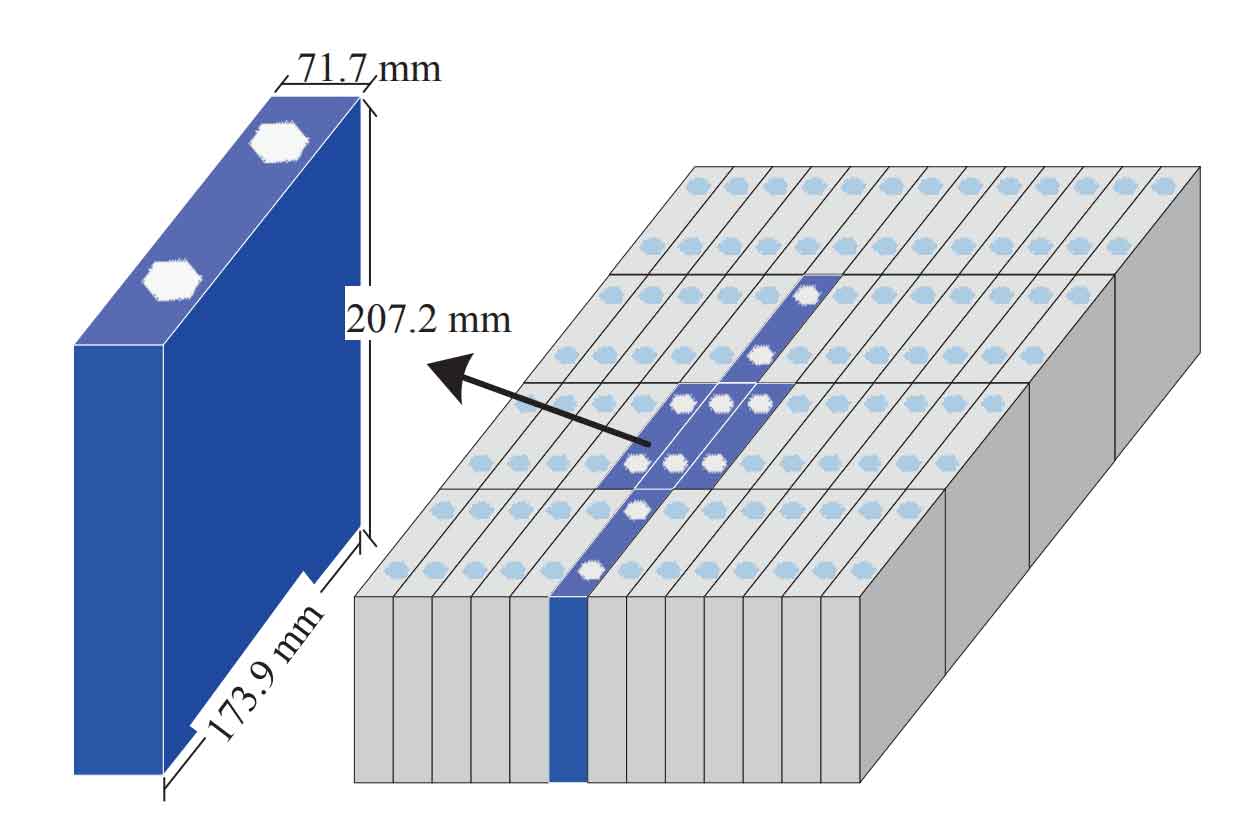

The integration of high shares of renewable energy and the formation of complex ultra-high voltage AC/DC hybrid grids present formidable challenges to power system frequency stability. Relying solely on the inertial response and governor action of traditional synchronous generators is becoming insufficient to ensure satisfactory primary frequency control (PFC) performance. Concurrently, the rapid advancement of flexible resources, such as cell energy storage systems (CESS), offers new, potent solutions. CESS possesses distinct advantages including high power density, instantaneous and precise response capability, and high reliability, making it an ideal candidate for providing fast frequency regulation services. Consequently, establishing a market mechanism for PFC services to incentivize the participation of these flexible resources is an inevitable trend for ensuring grid stability and efficiency.

When a cell energy storage system is committed to providing PFC services based on a market contract, it must linearly respond to frequency deviations outside a defined deadband, similar to a traditional generator governor. However, unlike conventional generators with ample fuel, a cell energy storage system has finite energy storage capacity. To maintain adequate bidirectional (charging and discharging) regulation headroom for future frequency events, it must perform energy management—actively adjusting its state of charge (SOC)—during periods when the frequency is within the deadband and no service is required. The core problem lies in making these energy management decisions sequentially over time without perfect knowledge of future frequency regulation demands. An overly conservative strategy may fail to maintain sufficient regulation margin, leading to performance penalties, while an overly aggressive one may incur excessive operational costs from frequent charging/discharging and accelerate battery degradation, ultimately diminishing the lifetime economic return. Therefore, designing an optimal energy management strategy that dynamically balances service revenue, energy trading costs, performance penalties, and battery aging costs is paramount for the profitable operation of a cell energy storage system in PFC markets.

This sequential decision-making problem under uncertainty is quintessentially a controlled stochastic process. I show that the optimal energy management strategy for a cell energy storage system participating in PFC can be formulated and solved within the framework of a Markov Decision Process (MDP). The goal is to determine a policy that maximizes the total expected economic benefit over the entire operational lifecycle of the cell energy storage system, from its initial capacity to its end-of-life capacity.

Problem Formulation and System Modeling

The operational timeline is divided into distinct stages, demarcated by the instants when the system frequency crosses the deadband threshold. Each stage k is characterized by its initial state. The state must encapsulate the necessary information for decision-making: the current frequency regulation demand mode, and the status of the cell energy storage system itself.

The frequency regulation demand signal for the cell energy storage system, derived from the local frequency measurement and a droop characteristic, can be modeled as a continuous-time Markov chain with three states: Frequency High (demanding charge, denoted as ζ=1), Frequency Low (demanding discharge, ζ=-1), and Frequency Normal/Deadband (no demand, ζ=0). To capture temporal correlations more accurately than a simple first-order model, a second-order Markov chain is employed where the probability of the next state depends on the current and the previous state:

$$p\{\zeta_{k+1} \mid \zeta_k, \zeta_{k-1}, \ldots, \zeta_1\} = p\{\zeta_{k+1} \mid \zeta_k, \zeta_{k-1}\}$$

The sojourn time in the Frequency Normal state is modeled as exponentially distributed with rate parameter ν₀. For the frequency deviation states, the required regulation energy e is approximately linearly related to the duration of the deviation, and its distribution is modeled accordingly (e.g., an exponential distribution for the absolute value of the energy).
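The demand model above can be sketched as a small sampler. This is only an illustration under stated assumptions: the transition table `P2`, the rate `nu0`, and `mean_energy` are hypothetical placeholder values, and the sketch assumes that every deviation stage is followed by a deadband stage, so the second-order dependence only matters when the chain leaves ζ=0.

```python
import random

STATES = (-1, 0, 1)

# Hypothetical second-order transition table: key = (previous, current) mode,
# value = probabilities of the next mode in the order of STATES.
# The numbers are placeholders, not estimates from frequency data.
P2 = {
    (-1, 0): (0.6, 0.0, 0.4),   # a low-frequency event was just served
    (1, 0): (0.4, 0.0, 0.6),    # a high-frequency event was just served
    (0, -1): (0.0, 1.0, 0.0),   # assumption: deviations return to the deadband
    (0, 1): (0.0, 1.0, 0.0),
}


def next_mode(prev, cur, rng):
    """Draw the next demand mode given the previous and current modes."""
    return rng.choices(STATES, weights=P2[(prev, cur)], k=1)[0]


def simulate(n_stages, seed=0, nu0=0.5, mean_energy=0.05):
    """Return a list of (mode, sojourn_time, signed_energy) tuples."""
    rng = random.Random(seed)
    prev, cur = 1, 0
    path = []
    for _ in range(n_stages):
        if cur == 0:
            # Deadband stage: exponentially distributed sojourn time, no demand.
            path.append((cur, rng.expovariate(nu0), 0.0))
        else:
            # Deviation stage: exponentially distributed |e|, sign follows mode.
            energy = cur * rng.expovariate(1.0 / mean_energy)
            path.append((cur, None, energy))
        prev, cur = cur, next_mode(prev, cur, rng)
    return path
```

Calling `simulate(50, seed=1)` yields an alternating sequence of deadband and deviation stages that could feed a Monte Carlo evaluation of a candidate policy.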

The cell energy storage system’s state is defined by its effective energy capacity $s_k$ and its current energy content (or SOC) $b_k$. The capacity degrades over time due to cycling. Instead of complex electrochemical models, the concept of Lifecycle Energy Throughput (LET) is used. The initial LET, Ξ₀, represents the total energy the battery can process over its life before reaching its end-of-life capacity (e.g., 80% of initial capacity). Each charging/discharging action consumes part of this LET. The dynamics are:

$$ \Xi_{k+1} = \Xi_k - |\Delta b_k| $$

$$ s_{k+1} = s_0 \rho + (1-\rho)\frac{\Xi_{k+1}}{\Xi_0}s_0 = s_k - s_0 \frac{1-\rho}{\Xi_0} |\Delta b_k| $$

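where $\Delta b_k$ is the net energy change in stage k, and ρ is the retirement capacity ratio (the end-of-life capacity divided by the initial capacity $s_0$). The energy content updates simply as $b_{k+1} = b_k + \Delta b_k$, constrained within the safe limits $[\gamma_1 s_k, \gamma_2 s_k]$.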
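As a quick check of these dynamics, the update can be written as a short function. This is a minimal sketch: the function names and the numeric values in the usage note are illustrative assumptions, and the safe window is checked against the capacity at the start of the stage, as the bounds above are written.

```python
def capacity(xi, s0, xi0, rho):
    """Effective capacity for a remaining lifecycle throughput xi."""
    return s0 * rho + (1.0 - rho) * (xi / xi0) * s0


def step_battery(xi, b, delta_b, s0, xi0, rho, gamma1, gamma2):
    """Apply one stage's net energy change; return (xi_next, s_next, b_next)."""
    s = capacity(xi, s0, xi0, rho)
    b_next = b + delta_b
    # Safe operating window, evaluated with the current capacity s_k.
    if not gamma1 * s <= b_next <= gamma2 * s:
        raise ValueError("energy content would leave the safe SOC window")
    xi_next = xi - abs(delta_b)  # charging and discharging both consume LET
    return xi_next, capacity(xi_next, s0, xi0, rho), b_next
```

For example, with the hypothetical values `s0 = 4.0`, `xi0 = 8000.0`, and `rho = 0.8`, moving 1.0 unit of energy reduces the capacity by `s0 * (1 - rho) / xi0 = 0.0001`, in agreement with the closed-form expression for $s_{k+1}$.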

Therefore, the complete state vector for the MDP at the beginning of stage k is:

$$ x_k = (\zeta_k, s_k, b_k) $$

The decision variable $a_k$ is the target energy level to achieve by the end of stage k. The available actions depend on the current frequency state $\zeta_k$:

- If $\zeta_k = 1$ (Frequency High): The cell energy storage system must absorb energy as required. The only decision is to comply until full or demand ends.

- If $\zeta_k = -1$ (Frequency Low): The cell energy storage system must supply energy as required. The only decision is to comply until empty or demand ends.

- If $\zeta_k = 0$ (Frequency Normal): The cell energy storage system can choose any target energy level within its safe operating bounds to prepare for future events. This is the core energy management decision.

Given a state x and an action a, the system transitions to a new state x’ with a probability determined by the frequency state transition matrix and the distributions of regulation energy/sojourn time.
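The action structure described above might be sketched as follows. The grid spacing `step`, the function names, and the convention that `e` is the signed grid-side regulation energy (positive for charging) are assumptions made for illustration; the efficiency treatment mirrors the one used in the cost terms of the model.

```python
def feasible_targets(zeta, s, gamma1, gamma2, step):
    """Candidate target energy levels for the current state.

    In a deviation state the only action is to comply, marked here by None.
    In the deadband the targets form a grid over the safe window.
    """
    if zeta != 0:
        return [None]
    lo, hi = gamma1 * s, gamma2 * s
    n = int((hi - lo) / step + 1e-9)  # small guard against rounding error
    return [lo + i * step for i in range(n + 1)]


def comply(b, s, e, eta_c, eta_d, gamma1, gamma2):
    """Serve regulation energy e until done, full, or empty.

    Returns (delta_b, shortfall): the change in stored energy and the
    grid-side energy that could not be served.
    """
    if e >= 0:  # Frequency High: absorb energy
        delta_b = min(eta_c * e, max(gamma2 * s - b, 0.0))
        served = delta_b / eta_c
    else:       # Frequency Low: supply energy
        delta_b = -min(-e / eta_d, max(b - gamma1 * s, 0.0))
        served = eta_d * -delta_b
    return delta_b, abs(e) - served
```

A nonzero shortfall returned by `comply` is the quantity that a performance penalty would be charged on.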

The Markov Decision Process Model

The objective is to find a stationary policy π (a mapping from states to actions) that maximizes the expected total reward over the operational life of the cell energy storage system. The stage reward function, C(x, a), captures all immediate economic outcomes:

$$ C(x, a) = C^{prf} - C^{bs} - C^{pun} - C^{age} $$

The components are defined as follows for a typical stage:

- PFC Service Revenue ($C^{prf}$): Compensation for providing the regulation capacity, typically proportional to the contracted power P₀ and the stage duration τ.

$$ C^{prf} = c^{prf} P_0 \tau $$

where $c^{prf}$ is the capacity payment price.

- Energy Trading Cost/Revenue ($C^{bs}$): Cost incurred from buying electricity from the grid (or revenue from selling) during energy management in the frequency normal state.

$$ C^{bs} = I_{\zeta=0} \left[ I_{a \ge b} c^{buy} \frac{\Delta b}{\eta_c} + I_{a < b} c^{sell} \eta_d \Delta b \right] $$

Here, $c^{buy}$ and $c^{sell}$ are the electricity purchase and sale prices, $\eta_c$ and $\eta_d$ are the charge and discharge efficiencies, and $I$ is an indicator function.

- Performance Penalty Cost ($C^{pun}$): Penalty for failing to meet the exact regulation energy demand during frequency deviation events due to insufficient stored energy or remaining headroom.

$$ C^{pun} = c^{pun} I_{\zeta \ne 0} \left[ |e| - \left( I_{e>0}\frac{1}{\eta_c} + I_{e<0}\eta_d \right) |\Delta b| \right] $$

where $c^{pun}$ is the penalty price and e is the regulation energy demand.

- Battery Aging Cost ($C^{age}$): Cost associated with the consumption of the battery’s lifecycle throughput.

$$ C^{age} = \frac{c^{con} s_0 |\Delta b|}{\Xi_0} $$

where $c^{con}$ is the unit capacity investment cost of the cell energy storage system.
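The four components above combine into a single stage reward, which might be coded as follows. This is a sketch: the `Prices` container, the argument names, and all numeric values in the usage note are hypothetical, and `delta_b` is taken as the signed change in stored energy (target minus current level in the deadband).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prices:
    c_prf: float  # capacity payment price
    c_buy: float  # electricity purchase price
    c_sell: float  # electricity sale price
    c_pun: float  # penalty price for undelivered regulation energy
    c_con: float  # unit capacity investment cost


def stage_reward(zeta, delta_b, e, tau, prices, p0, s0, xi0, eta_c, eta_d):
    """Stage reward C = C_prf - C_bs - C_pun - C_age."""
    c_prf = prices.c_prf * p0 * tau

    c_bs = 0.0
    if zeta == 0:
        if delta_b >= 0:  # buying: grid energy exceeds stored energy
            c_bs = prices.c_buy * delta_b / eta_c
        else:             # selling: delta_b < 0, so the "cost" is negative
            c_bs = prices.c_sell * eta_d * delta_b

    c_pun = 0.0
    if zeta != 0:
        # Convert the stored-energy change into grid-side energy served.
        factor = 1.0 / eta_c if e > 0 else (eta_d if e < 0 else 0.0)
        c_pun = prices.c_pun * (abs(e) - factor * abs(delta_b))

    c_age = prices.c_con * s0 * abs(delta_b) / xi0
    return c_prf - c_bs - c_pun - c_age
```

With the placeholder prices `Prices(10, 50, 40, 200, 1000)`, `p0 = 1`, `tau = 0.5`, `s0 = 4`, `xi0 = 8000`, and both efficiencies at 0.9, charging 0.9 units in the deadband gives 5 − 50 − 0 − 0.45 = −45.45. The purchase cost dominates the capacity payment for that stage, which is why unnecessary deadband transactions are expensive.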

The optimal value function V*(x), representing the maximum expected total reward starting from state x, satisfies the Bellman optimality equation:

$$ V^*(x) = \max_{a \in A(x)} \left\{ C(x, a) + \mathbb{E}_{x' \sim p(\cdot \mid x,a)} \left[ V^*(x') \right] \right\} $$

Solving this equation yields the optimal policy π*.

Solving the MDP: The Dimensionality Reduction Parallel Value Iteration (DRPVI) Algorithm

Directly applying standard solution algorithms like Value Iteration or Policy Iteration to this MDP is computationally prohibitive due to the “curse of dimensionality.” The state space is large, being the product of the discrete frequency states, the discretized capacity levels, and the discretized energy content levels. To overcome this, I propose a novel Dimensionality Reduction Parallel Value Iteration (DRPVI) algorithm that exploits the specific structure of the problem.

The key insights are:

- State Space Decomposition: The capacity degradation process is monotonic and irreversible. Therefore, the state space can be naturally layered according to the effective capacity level s. Let layer h contain all states with capacity $s_h$. The transition from a state in layer h can only lead to states in the same or lower (degraded) layers, creating a directed acyclic graph structure.

- Successor State Identification: For a state in layer h, its possible successor states are not the entire state space but are confined to a limited subset of lower layers (h, h-1, …, h-Δh), where Δh depends on the maximum possible capacity degradation in one stage. This drastically reduces the number of terms in the expectation calculation within the Bellman equation.

- Parallel Computation: The value function updates for all states within the same capacity layer are independent of each other, given the values from lower layers. This independence allows for perfect parallelization across states within a layer.

The DRPVI algorithm proceeds as follows:

Step 1: Decompose the state space into H layers based on capacity: $\chi_h = \{x \mid s = s_h\}$.

Step 2: Initialize the value function for the terminal layer (where capacity reaches retirement level): V(χ₁) = 0.

Step 3: Iterate backwards from layer h=2 to layer h=H. For each layer, for each state $x \in \chi_h$, compute:

$$ V_{new}(x) = \max_{a \in A(x)} \left\{ C(x, a) + \sum_{x' \in J(x)} p(x' \mid x, a) V_{old}(x') \right\} $$

where J(x) is the identified, limited set of successor states from layers ≤ h. The maximization over actions a is performed to find the optimal decision for state x.

Step 4: Parallelize the inner loop. The computations of $V_{new}(x)$ for different x within the same layer $\chi_h$ are distributed across multiple CPU cores.

This algorithm converges in a finite number of passes (equal to the number of layers) and is significantly more efficient than standard algorithms. The reduction in the successor state space and the parallel computation synergistically alleviate the curse of dimensionality.
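The four steps above can be illustrated with a toy sketch. It is not the original implementation, and several choices are assumptions: the function name `drpvi`, the toy model (layers, rewards, and transition probabilities are entirely hypothetical), the repeated sweeps within a layer to handle same-layer successors, and the use of `ThreadPoolExecutor` as a stand-in for a multi-core process pool.

```python
from concurrent.futures import ThreadPoolExecutor


def drpvi(layers, actions, reward, transitions, tol=1e-9, max_sweeps=10_000, workers=4):
    """Layer-by-layer value iteration.

    layers[0] is the terminal (retired) layer; states in layers[h] may only
    reach layers[h], layers[h-1], ... (same or more degraded capacity).
    """
    V = {x: 0.0 for x in layers[0]}  # Step 2: terminal values are zero
    policy = {}

    def backup(x, v_old):
        # One Bellman backup for a single state, reading only old values.
        best_q, best_a = None, None
        for a in actions(x):
            q = reward(x, a) + sum(p * v_old[y] for p, y in transitions(x, a))
            if best_q is None or q > best_q:
                best_q, best_a = q, a
        return x, best_q, best_a

    with ThreadPoolExecutor(max_workers=workers) as pool:
        for layer in layers[1:]:  # Step 3: from least to most capacity
            V.update((x, 0.0) for x in layer)
            for _ in range(max_sweeps):
                v_old = dict(V)  # frozen snapshot for this sweep
                # Step 4: states of one layer are backed up independently.
                results = list(pool.map(lambda x: backup(x, v_old), layer))
                delta = 0.0
                for x, q, a in results:
                    delta = max(delta, abs(q - v_old[x]))
                    V[x], policy[x] = q, a
                if delta < tol:
                    break
    return V, policy


# Toy model (entirely hypothetical): state = (layer h, mode j).
H = 3
layers = [[(h, 0), (h, 1)] for h in range(H + 1)]


def actions(x):
    return ["idle", "cycle"] if x[1] == 0 else ["comply"]


def reward(x, a):
    return {"idle": 0.6, "cycle": 1.0, "comply": 0.1}[a]


def transitions(x, a):
    h, _ = x
    if a == "idle":   # may stay in the same layer or degrade by one layer
        return [(0.5, (h, 1)), (0.5, (h - 1, 0))]
    if a == "cycle":  # always degrades by one layer
        return [(1.0, (h - 1, 0))]
    return [(1.0, (h, 0))]  # "comply" returns to mode 0 in the same layer


V, policy = drpvi(layers, actions, reward, transitions)
```

Each layer is solved once, reading only values from the same or lower layers, so the outer loop makes a single pass over the layers. In this toy model the "idle" action is optimal in mode 0 and each layer adds 1.3 to the value of the mode-0 state.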

Results and Analysis of the Optimal Policy

Solving the MDP model via the DRPVI algorithm yields an optimal energy management policy for the cell energy storage system. This policy has the form of a dynamic threshold strategy, which is more sophisticated than static or fixed-threshold strategies found in heuristic approaches. The optimal action in the Frequency Normal state (ζ=0) is defined by a target zone for the state of charge b, relative to the current capacity s.

The structure of this policy reveals crucial insights:

| State Feature | Impact on Optimal Policy Thresholds | Rationale |
|---|---|---|
| Capacity Degradation ($s_k$ decreases) | The upper and lower SOC thresholds converge towards the middle. | As the cell energy storage system’s total energy capacity shrinks, maintaining the same absolute headroom becomes impossible. The policy dynamically reduces the target zone to preserve relative bidirectional regulation margin while preventing excessive cycling that would further accelerate degradation. |
| Previous Frequency State ($\zeta_{k-1}$) | The target zone shifts. If $\zeta_{k-1}=1$, the zone is biased lower (more room to charge). If $\zeta_{k-1}=-1$, the zone is biased higher (more room to discharge). | This leverages the second-order Markov property of frequency. Consecutive frequency deviations in the same direction have a non-zero probability. The policy proactively adjusts the SOC to be better prepared for the most likely next demand, improving responsiveness and reducing penalty risks. |
| Current SOC ($b_k$) | If $b_k$ is below the lower threshold, the action is to charge to the threshold. If above the upper threshold, the action is to discharge to the threshold. If within, do nothing. | This is the core energy management logic, minimizing unnecessary transactions (and associated costs/losses) while keeping the cell energy storage system primed for action. |
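The threshold logic in the table can be expressed as a short rule. This is a sketch only: the `zone` lookup and its SOC fractions are hypothetical, and a fuller version would also index the zone by capacity level so that the thresholds can narrow as the battery degrades.

```python
def target_energy(b, s, prev_zeta, zone):
    """Deadband action of a double-threshold policy.

    zone maps the previous frequency state to (lower, upper) SOC fractions
    of the current capacity s. Returns the target energy level.
    """
    lower_frac, upper_frac = zone[prev_zeta]
    lower, upper = lower_frac * s, upper_frac * s
    if b < lower:
        return lower  # charge up to the lower threshold
    if b > upper:
        return upper  # discharge down to the upper threshold
    return b          # inside the target zone: do nothing


# Hypothetical zones: biased lower after a high-frequency event (room to
# charge), biased higher after a low-frequency event (room to discharge).
ZONE = {1: (0.35, 0.55), -1: (0.45, 0.65)}
```

For instance, `target_energy(1.0, 4.0, 1, ZONE)` asks the system to charge to the lower threshold, while an energy level already inside the zone is returned unchanged.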

To quantify the benefits, the performance of this MDP-based strategy is compared against several benchmarks using Monte Carlo simulation with real frequency data:

- Strategy A (No Management): The cell energy storage system only responds to frequency deviations and never actively manages its SOC in the deadband. This leads to frequent exhaustion of regulation headroom, severe penalties, and a negative return on investment.

- Strategy B (Fixed 50% SOC Target): The cell energy storage system always charges/discharges to 50% SOC in the deadband. This maintains good regulation capability but incurs high energy trading costs and, most critically, excessively frequent cycling that rapidly degrades the battery, shortening its profitable life.

- Strategy C (Static Double-Threshold): A policy derived from a simpler model with i.i.d. frequency assumptions and no capacity fade. While better than A or B, its static thresholds are suboptimal when faced with correlated demand and a degrading asset.

The MDP-based dynamic strategy significantly outperforms all benchmarks. It increases the net lifetime profit by approximately 30-35% compared to Strategy C. More importantly, it achieves this while maintaining an exceptionally low Frequency Energy Deviation (FED) ratio—the percentage of demanded regulation energy not delivered—on the order of 10⁻⁴%, demonstrating its ability to simultaneously maximize economics and technical performance for the cell energy storage system.
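The FED ratio defined above can be computed from simulated stage records as follows. This is a minimal sketch: the function name and the list-based inputs are illustrative assumptions, with both lists holding signed grid-side energies per deviation event.

```python
def fed_ratio(demanded, delivered):
    """Percentage of demanded regulation energy that was not delivered."""
    total = sum(abs(e) for e in demanded)
    if total == 0:
        return 0.0  # no demand, so nothing could be missed
    missed = sum(max(abs(e) - abs(d), 0.0) for e, d in zip(demanded, delivered))
    return 100.0 * missed / total
```

For example, if 0.5 units out of 4.0 units of demanded energy are missed, the function returns 12.5.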

Computational Efficiency of the DRPVI Algorithm

The effectiveness of the proposed DRPVI algorithm is validated by comparing its computation time against a standard Gauss-Seidel Value Iteration (GSVI) algorithm for state spaces of increasing size. The problem dimension is increased by expanding the range of capacity degradation (e.g., from 4.1 MWh to 4.3 MWh initial capacity, retiring at 4.0 MWh).

| Initial Capacity (MWh) | State Space Size | GSVI Time (s) | DRPVI Time (s) | Speedup Ratio |
|---|---|---|---|---|
| 4.1 | Small | 66 | 9 | 7.3 |
| 4.2 | Medium | ~1,200 | 58 | 20.7 |
| 4.3 | Large | >2,500 (est.) | 121 | >23.6 |

The results are clear: the computation time of the standard GSVI algorithm grows steeply with state space size (the curse of dimensionality), whereas the time for the DRPVI algorithm increases only moderately. The speedup factor grows with the problem size, confirming that the state space decomposition and successor state identification eliminate a large amount of redundant computation. This makes solving the high-fidelity MDP model for practical cell energy storage system configurations computationally tractable.

Conclusion and Outlook

In this work, I have formulated the energy management problem for a cell energy storage system providing primary frequency regulation as a Markov Decision Process. The model incorporates the temporal correlation of frequency signals and the dynamic degradation of battery capacity through lifecycle throughput, aligning closely with real-world operational concerns. To solve this high-dimensional MDP efficiently, I developed the Dimensionality Reduction Parallel Value Iteration (DRPVI) algorithm, which leverages the problem’s inherent structure to achieve significant computational speedups.

The resulting optimal policy is a dynamic threshold strategy that intelligently adapts the target state-of-charge zone based on the remaining capacity of the cell energy storage system and the recent history of frequency events. This strategy demonstrably optimizes the trade-off between service revenue, energy arbitrage, performance penalties, and battery aging, leading to a substantial improvement in lifetime profitability compared to conventional static strategies.

This research provides a rigorous and practical framework for maximizing the value of a cell energy storage system in frequency regulation markets. Future work will involve extending the model to co-optimize participation in multiple markets (e.g., energy arbitrage and frequency regulation), incorporating more detailed battery aging models, and adapting the market reward and penalty structure to align with specific regional grid codes and market designs that are emerging worldwide. The core MDP methodology and the DRPVI algorithm, however, establish a strong foundation for the optimal control and profitable operation of cell energy storage systems in the modern, stochastic electricity grid.